About

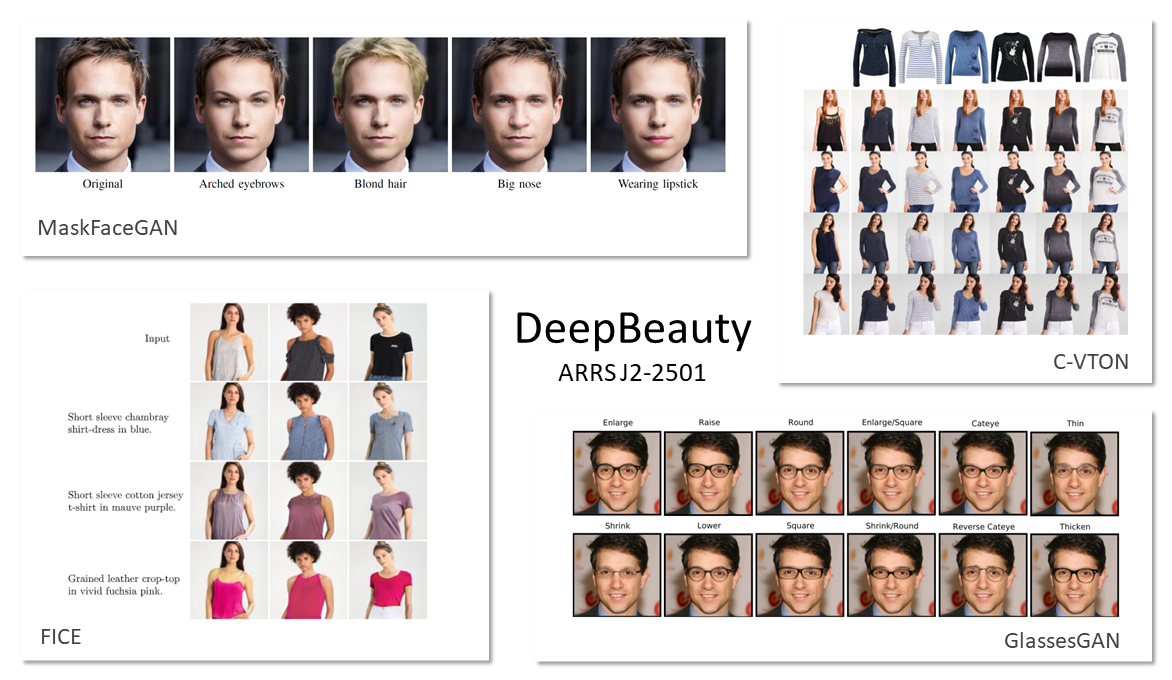

The Deep Generative Models for Beauty and Fashion (DeepBeauty) project aims to contribute to research on image generation and editing, with a particular focus on deep learning methods, which have recently proven to be a convenient and effective tool for this task. The goal of the project is to develop novel, flexible and robust mechanisms for image editing tailored to the needs of the beauty and fashion industries. These mechanisms should be capable of altering selected parts of an input image in accordance with a predefined target appearance (e.g., an example of a specific makeup style, or an image of a model wearing a fashion item such as a piece of clothing or an accessory). The main tangible result of the project is expected to be a novel and highly robust virtual try-on technology based on original approaches to face and body editing. The developed technology will be able to edit images in a photo-realistic manner while preserving the overall visual appearance of the subjects in the images.

DeepBeauty (ARRS: J2-2501 (A)) is a fundamental research project funded by the Slovenian Research Agency (ARRS) for the period 1.9.2020 – 28.2.2024 (1.8 FTE per year).

The Principal Investigator (PI) of DeepBeauty is Prof. Vitomir Štruc, PhD.

Link to SICRIS: Follow me.

Project overview

DeepBeauty is structured into 6 work packages:

- WP1: Coordination and management

- WP2: Latent space exploration

- WP3: Face editing for beauty and fashion

- WP4: Virtual try-on

- WP5: Demo room and exploitation

- WP6: Dissemination

The R&D work on these work packages is expected to result in:

- A better understanding of the latent space of modern generative deep learning models

- Flexible and photo-realistic face editing technology

- Flexible and photo-realistic body editing technology

Project phases

- Year 1: Activities on work packages WP1, WP2, WP3, WP4, WP6

- Year 2: Activities on work packages WP1, WP2, WP3, WP4, WP6

- Year 3: Activities on work packages WP1, WP2, WP3, WP4, WP5, WP6

Partners

DeepBeauty is conducted jointly by:

- The Laboratory for Machine Intelligence (LMI), Faculty of Electrical Engineering, University of Ljubljana

- The Computer Vision Laboratory (CVL), Faculty of Computer and Information Science, University of Ljubljana

- Alpineon Ltd.

- The Computer Vision Research Laboratory

Participating researchers

- Prof. Vitomir Štruc, PhD – Project Leader (PI)

- Assoc. Prof. Simon Dobrišek, PhD, Researcher LMI

- Assoc. Prof. Janez Perš, PhD, Researcher LMI

- Ass. Janez Križaj, PhD, Researcher LMI

- Ass. Klemen Grm, Researcher LMI

- Ass. Martin Pernuš, Researcher LMI

- Ass. Marija Ivanovska, Researcher LMI

- Peter Rot, Researcher LMI

- Benjamin Fele, Researcher LMI

- Prof. Peter Peer, PhD, PI at CVL

- Prof. Franc Solina, PhD, Researcher CVL

- Ass. Prof. Žiga Emeršič, Researcher CVL

- Ass. Blaž Meden, Researcher CVL

- Matej Vitek, Researcher CVL

- Ajda Lampe, Researcher at CVL and LMI

- Jerneja Žganec Gros, PhD, PI at Alpineon

- Jaka Kravanja, PhD, Researcher Alpineon

International Advisory Committee

- Prof. Clinton Fookes, PhD, Queensland University of Technology, Australia

- Assoc. Prof. Hugo Pedro Proenca, Universidade da Beira Interior, Portugal

- Assoc. Prof. Vishal Patel, Johns Hopkins University

- Assoc. Prof. Walter Scheirer, PhD, University of Notre Dame, USA

Project publications

- Ajda Lampe, Julija Stopar, Deepak Jain, Shinichiro Omachi, Peter Peer, Vitomir Štruc: DiCTI: Diffusion-based Clothing Designer via Text-guided Input, Workshop on Synthetic Data for Face and Gesture Analysis, FG 2024, pp. 1-9, 2024 [PDF]

- Janez Križaj; Richard O. Plesh; Mahesh Banavar; Stephanie Schuckers; Vitomir Štruc: Deep Face Decoder: Towards understanding the embedding space of convolutional networks through visual reconstruction of deep face templates, Engineering Applications of Artificial Intelligence, vol. 132, 107941, pp. 1-20, 2024 [PDF]

- Martin Pernuš, Vitomir Štruc, Simon Dobrišek, MaskFaceGAN: High Resolution Face Editing with Masked GAN Latent Code Optimization, IEEE Transactions on Image Processing, vol. 32, 2023 [PDF][IEEE Xplore][GitHub page]

- Martin Pernuš; Mansi Bhatnagar; Badr Samad; Divyanshu Singh; Peter Peer; Vitomir Štruc; Simon Dobrišek: ChildNet: Structural Kinship Face Synthesis Model With Appearance Control Mechanisms, IEEE Access, pp. 1-22, 2023 [PDF]

- Martin Pernuš, Clinton Fookes, Vitomir Štruc, Simon Dobrišek, FICE: Text-Conditioned Fashion Image Editing with Guided GAN Inversion, arXiv preprint, 2022. [PDF][GitHub page]

- Richard Plesh, Peter Peer, Vitomir Štruc, GlassesGAN: Eyewear Personalization using Synthetic Appearance Discovery and Targeted Subspace Modeling, Computer Vision and Pattern Recognition (CVPR), 2023 [PDF][GitHub page]

- Julijan Jug; Ajda Lampe; Vitomir Štruc; Peter Peer, Body Segmentation Using Multi-task Learning, International Conference on Artificial Intelligence in Information and Communication (ICAIIC), pp. 1-7, 2022 [PDF]

- Julijan Jug, Ajda Lampe, Peter Peer, Vitomir Štruc. Segmentacija telesa z uporabo večciljnega učenja (Body segmentation using multi-task learning; in Slovenian), ROSUS, 2022 [PDF]

- Darian Tomašević, Peter Peer, Vitomir Štruc, BiOcularGAN: Bimodal Synthesis and Annotation of Ocular Images, IEEE/IAPR International Joint Conference on Biometrics (IJCB), 2022 [PDF][GitHub project]

- Benjamin Fele; Ajda Lampe; Peter Peer; Vitomir Štruc, C-VTON: Context-Driven Image-Based Virtual Try-On Network, IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), pp. 1–10, 2022 [PDF][GitHub project]

Open Source Code

- GlassesGAN [GitHub]

- MaskFaceGAN [GitHub]

- BiOcularGAN [GitHub]

- C-VTON [GitHub]

- FICE [GitHub]

- ChildNet [GitHub]

Patents

- Martin Pernuš, Vitomir Štruc, Simon Dobrišek, Tomaž Černe, Jerneja Žganec-Gros, Postopek za napredno urejanje lastnosti obraza v digitalnih slikah (Method for advanced editing of facial attributes in digital images; in Slovenian), Patent application (approved), 2021 [PDF]

Master Theses

- Julija Stopar, Fashion Image Editing Through Text Descriptions, Master Thesis, Supervisors: Vitomir Štruc and Shinichiro Omachi, 2023 [PDF]

- Andraž Puc, Virtualno pomerjanje frizur z uporabo generativnih nevronskih modelov (Virtual hairstyle try-on using generative neural models; in Slovenian), Master Thesis, Supervisor: Vitomir Štruc, 2022 [PDF]

- Darian Tomašević, Generating ocular images with deep generative models, Master Thesis, Supervisors: Peter Peer and Vitomir Štruc, 2022 [PDF]

- Julijan Jug, Body segmentation using multi-task learning, Master Thesis, Co-supervisors: Peter Peer and Vitomir Štruc, 2021 [PDF]

Bachelor Theses

- Luka Zornada, Razvoj aplikacije za virtualno pomerjanje ličil in preurejanje obrazov (Development of an application for virtual makeup try-on and face editing; in Slovenian), Bachelor Thesis, Supervisor: Vitomir Štruc, 2022 [PDF]

- Marija Jakimovska, Analiza modelov za preurejanje obraznih slik v lepotni industriji (Analysis of models for facial image editing in the beauty industry; in Slovenian), Bachelor Thesis, Supervisor: Vitomir Štruc, 2022 [PDF]

Invited Talks

- Vitomir Štruc, Generative models in computer vision: from fashion and beauty to biometrics, Keynote, 1st Workshop on Interdisciplinary Applications of Biometrics and Identity Science, Waikoloa Beach, Hawaii, 5.1.2023.

- Vitomir Štruc, Generative models in computer vision: from fashion and beauty to biometrics, Queensland University of Technology, December 2022.

- Vitomir Štruc, Computer vision for fashion and beauty, Keynote talk, Huawei Future Device Technology Summit, Helsinki, Finland, 8-9.11.2022. [PDF]

- Vitomir Štruc, Generative models in computer vision and biometrics, Lecture at the EURASIP JIVP Webinar, online, 6.10.2022 [video]

- Vitomir Štruc, Generative models in computer vision and biometrics, Lecture at Istanbul Technical University, Istanbul, Turkey, 21. 9. 2022.

- Vitomir Štruc, Photorealistic face editing via latent code optimization, Keynote talk, Workshop Digital Face Manipulation & Detection, organized by European Association for Biometrics (EAB), online, 12. 7. 2022

- Vitomir Štruc. Generative models in computer vision and biometrics, Lecture at the University of Salzburg, Salzburg, Austria, 14. 6. 2022.

- Vitomir Štruc, Generative models in biometrics, Talk at the Trustworthy Biometrics Webinar, IEEE Biometrics Beijing Chapter, online, 6. 5. 2022 [PDF]

- Vitomir Štruc, Generative Models for Computer Vision, Keynote Talk, Sixth IAPR International Conference on Computer Vision & Image Processing (CVIP2021), Punjab, India [PDF slides]

- Peter Peer. From ear recognition to deidentification and virtual try on, Lecture at Faculdade de Engenharia, Universidade do Porto, September 29, 2021.

Awards

- Darian Tomašević, Prešeren Award of the Faculty of Computer and Information Science, FRI UL, 2023

- Benjamin Fele and Ajda Lampe, Award and recognition for research work conducted by PhD students for their WACV 2022 paper, Faculty of Computer and Information Science, UL, 2022.

Funding agency